How to get a high TOEFL speaking score – with John Healy

If you’re looking to get a high TOEFL speaking score, your first port of call should be the platform My Speaking Score.

Just recently, I interviewed the Founder and CEO of My Speaking Score, John Healy.

Before I share the interview with you, I will provide a short account of John’s journey from owner of an IT consultancy business to harnessing Generative AI to supercharge language education. After this biography, I’ll give a brief overview of My Speaking Score and of an automated scoring system called SpeechRater.

A little bit about John Healy

It was in 2003 that John Healy sold his fledgling IT consultancy business. He headed to South Korea to teach English for a year.

Such is the unpredictability of life that John stayed in South Korea for 15 years.

It’s safe to say that John had an eventful time in East Asia. He grew to adore the South Korean people, learned to speak the language (badly, according to John) and moved all over. John taught in small schools and universities, and also volunteered in churches. He also taught Business English to executives.

Between 2009 and 2013, John did his master’s in TESOL.

His online courses TOEFL Clear Strategies and Tactics, Study With It, TOEFL Turbo Tactics, and many others, have helped learners from over 100 countries develop English skills and achieve high TOEFL scores.

John’s proudest moment in South Korea was marrying “the one”. He became a dad to Kayla in 2018. These days, he lives with his family in Florence, Italy.

John Healy has found his place in Florence and is clearly a man on a mission - to help students get a high TOEFL speaking score.

What is My Speaking Score?

My Speaking Score is a Software-as-a-Service (SaaS) platform that specialises in online English language training and assessment.

The My Speaking Score platform uses the ETS SpeechRater scoring engine to provide test takers with instant data-heavy feedback on their TOEFL Speaking.

If you head onto myspeakingscore.com, you will be able to do the following:

- Select any TOEFL Speaking practice test of your choice

- Voice record your responses to task questions using your microphone

- Submit the responses you would like grading by SpeechRater

- Assess your SpeechRater score data

- Repeat

What is SpeechRater?

SpeechRater is a tool that analyses and rates spontaneously spoken English in real time. It is designed to help people improve their speaking skills by providing feedback on various aspects of their speech, such as pronunciation, fluency, and vocabulary.

SpeechRater uses advanced artificial intelligence algorithms to analyse spoken language and provide feedback in the form of scores and suggestions for improvement. The scoring engine is fully integrated into My Speaking Score.

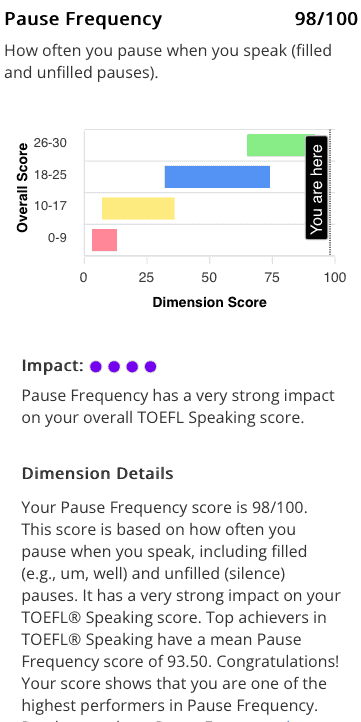

Check out this page to find out more about the 12 SpeechRater indicators of a candidate’s speech. These dimensions include vocabulary depth, distribution of pauses and speaking rate.

Be sure to check out this SpeechRater report as well to see how the My Speaking Score platform and SpeechRater complement one another.

Interview

1. In a nutshell, My Speaking Score is a powerful speech scoring engine built specifically for TOEFL Speaking teachers. Can you reveal a little more about what inspired you to produce this platform, and how does it work exactly?

“I’m a trained EFL teacher from way back in 2003. I moved to South Korea ostensibly for a year, and ended up staying 15. I fell in love with teaching and did pretty much everything a teacher can do in South Korea. And in 2018, I was working at Korea University, considered by many to be the finest school in the country. My baby daughter, Kayla, was also born that year. So, it was a big turning point in my life and I decided to move my operation online.

I was doing a lot of the same work that so many teachers do now. Language instruction in various domains, and also bits and pieces in the test prep world. And I was doing a lot of TOEFL and in South Korea, like many countries - Poland included - TOEFL is extremely important. It's a status accomplishment that demonstrates to the world that your English proficiency is a certain level. Moreover, it's a requirement to gain access into organisations, to study abroad and so on.

So I was doing a lot of that high stakes test prep work. One of the problems that I was encountering time and time again was being able to deliver fast feedback. In the My Speaking Score ecosystem, we call this fast feedback. This refers to being able to give speakers instant assessment diagnostic information about their speaking abilities.

Typically, in the old days, the way that students received such information was through real time interaction with a teacher. If the teacher was effective, he or she would identify some patterns in a student’s output and offer some type of targeted correction, and they'd work on that specific aspect. In the test prep context, and the TOEFL speaking context particularly, the teacher would use the ETS* rubrics to guesstimate a student’s total speaking score. That was a big pain point for me because the feedback was often delayed. Sure, in a scheduled hour-long class, teachers hear a lot from their students. But more often than not students need feedback when they're not online - when they're not with their teacher. I was finding that if the feedback was delayed, there was just no real effective intervention, no effective repair that was taking place.

In a nutshell, the speech recognition service, SpeechRater, had actually been around for about a decade when I went online. But it was being more widely understood to be a part of the TOEFL speaking assessment methodology only around five years ago. And certainly ETS was using SpeechRater, and continues to do so to this day, as part of the assessment of TOEFL speaking during the real test. So, I have a relationship with ETS and we use SpeechRater on My Speaking Score to deliver exactly that fast feedback that I was sorely lacking in my transition from my university days in South Korea to online. The aim is clear - to help people get a high TOEFL speaking score without giving my own subjective opinion as to one’s speaking performance.”

* What is ETS?

ETS stands for Educational Testing Service. It is a US-registered non-profit organisation.

Founded in 1947, and now a leader in educational research, ETS is the world’s largest administrator of standardised tests. These tests include TOEIC and, of course, TOEFL.

2. When you set the wheels in motion for My Speaking Score, didn’t you initially feel your own opinions on students’ speaking skills were becoming devalued?

“It wasn’t my intention to devalue my own opinions. I merely strived to offer students a means to learn in a much more self-guided way, and for them to get that instant feedback so they could make corrections in a way that benefited them specifically in a TOEFL speaking context.

I see a lot of EFL/ESL instruction techniques creeping into TOEFL speaking preparation classes. TOEFL speaking teachers don’t really need to prepare classes that revolve around skills such as expression, tone, persuasion and negotiation because they’re not measured in the TOEFL speaking context. Using an automated scoring engine and other reports we generate on My Speaking Score allows a user to focus only on what matters. So, everything’s much more data driven and much more specific to the TOEFL speaking context. In a nutshell, that’s what My Speaking Score is about - take the opinion out, put the facts in, and speed up the feedback.”

3. In recent times, I’ve realised that I need to find my own teaching niche. I’ve actually begun to home in on the CPE speaking exam and I’m quite well up on the CPE speaking rubrics and what’s expected of students. Do you firmly believe that, without a speech scoring engine and data to hand, even the most experienced examiners for Cambridge English speaking tests and the IELTS Speaking Test rely on a large amount of “guesstimation” when it comes to grading?

“In the world of speaking assessment, under the broad constructs of fluency or pronunciation or language use or even something that’s typically much more subjective like topic development or logic or discourse coherence, it's hard to imagine that a human assessor, or a human teacher, relies on very much factual information, right?

Consider the very informal and traditional ways to assess someone's speaking proficiency, which we do all the time. We say things like “that guy's English is really good”. Teachers I know often made generalisations like “we need to work a little bit on your pronunciation” or “we need to improve your vocabulary”. Those types of subjective observations or recommendations, I think are not as effective to the student as they sound to the teacher. A comment like “We really need to work on your fluency” suggests that the teacher is going to take the learner on some type of journey of an unknown length of time. Why? To improve, in some unknown way, an unmeasurable aspect of their communication to align it with the teacher's vision of what a desirable speaking outcome should be.

So in the IELTS world, and other standardised English tests that still rely heavily on teacher assessment, teachers follow a rubric. These are highly trained individuals. However, it's an interesting thought experiment, and I think an effective practice overall, to look at many aspects of speaking as quantifiable measures. So what SpeechRater does, and it's very clever, is it takes a corpus of non-native speech and compares it to native speech in order to observe similarities and differences. For example, the frequency and distribution of pauses that occur as a native speaker is speaking can serve as a template for fluency for non-native speakers. Then, we can start measuring those gaps. Therefore, by quantifying that type of information, we can visually show a non-native speaker - this is where you are.

In essence, then, the reason that a human or SpeechRater would deem a non-native speaker to have a fluency deficit or an issue with your fluency is because the amount of silence between your words in the middle of your speech is longer than, for instance, 1.4 seconds.”

SpeechRater result for the Pause Frequency dimension
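
To make John's point about quantifying pauses concrete, here is a minimal sketch in Python. It is not how SpeechRater works internally (John himself describes the engine as a black box). It assumes you already have a start and end time for each spoken word, for example from a speech recogniser, and it uses the 1.4-second figure from John's answer purely as an illustrative threshold. The function names and the sample timings are hypothetical.

```python
from typing import List, Tuple

# (start_seconds, end_seconds) for each spoken word, in speaking order.
# These timings are an assumed input; they are not produced by this sketch.
WordTiming = Tuple[float, float]


def silences_between_words(timings: List[WordTiming]) -> List[float]:
    """Return the silent gap, in seconds, between each pair of consecutive words."""
    return [
        max(0.0, next_start - prev_end)
        for (_, prev_end), (next_start, _) in zip(timings, timings[1:])
    ]


def long_pauses(timings: List[WordTiming], threshold_seconds: float = 1.4) -> List[float]:
    """Return only the gaps longer than the threshold (1.4 s is illustrative)."""
    return [gap for gap in silences_between_words(timings) if gap > threshold_seconds]


# Hypothetical timings for four words, with one long hesitation before the third.
example = [(0.0, 0.5), (0.75, 1.0), (3.0, 3.5), (3.5, 4.0)]

gaps = silences_between_words(example)
print(gaps)                    # gaps between consecutive words
print(long_pauses(example))    # gaps that exceed the threshold
print(sum(gaps) / len(gaps))   # average silence between words
```

With numbers like these on screen, a teacher or a self-guided learner can point to the exact hesitation that needs work instead of relying on a general impression of fluency.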

4. What would be your response to teachers who say that AI tools diminish the “human element” at the heart of teacher-student relationships?

“Why do some language teachers see AI as a threat that might render the human element of teaching obsolete? Is that why it's considered unwelcome in some teaching circles? Imagine those flustered teachers: “I'm gonna get replaced by a computer” or “a robot is going to take my job”. Or, is there such a sincere concern that the beating heart of a human being must itself be at the heart of teaching and learning due to other factors that are important to engagement and motivation, like empathy and so on?

Why does AI have a bad reputation when it comes to learning? I was playing around with ChatGPT the other day and it's absolutely astounding. The crux of my argument is - there are people in the world that can't afford to learn with expensive teachers who charge by the hour. At the root of your question is the implication that there’s somehow an inauthenticity to replacing humans in some way. This argument that assessment mechanisms or even automation tools contaminate the teaching and learning experience requires deep scrutiny in my opinion.”

5. Indeed John. Some great feedback has been coming in for my app - Komified - but it’s begun to dawn on me that, without AI, it may be firmly stuck in the past a few years down the line.

“AI is now an omnipresent reality. Let me tell you something important though. My head data scientist, Hakan, who’s Turkish and has a PhD, has made his bones in professional development and, really, the human angle in teaching. He specialises in mentor-mentee relationships and how humans can help each other become better and more proficient practitioners and so on. We recently pitched a presentation on AI in, not only student assessment and problem diagnosis which My Speaking Score does, but also some of the implications it has for self-guided personal development. We tend to think about AI - as a diagnostic tool - from a student's point of view. However, we also need to think about AI from a teacher’s point of view in terms of skill building.

Overall, it's hard to imagine a future where apps like Komified can exist without some type of AI layer.”

6. Let's get back on track then and look at SpeechRater. Have you seen many gaps in this scoring engine? Is there anything you think you could improve further still?

“First of all, SpeechRater is a bit of a black box. It's proprietary technology owned and controlled by ETS. The part of SpeechRater that they put out there for people like me to licence is not the whole piece. However, we don't really know what they're holding back. This is a totally understandable strategic business move on the part of ETS. So, hard to criticise something that you haven't had the opportunity to fully assess. There are a lot of mechanisms and calculations determining the scores within their algorithm that we don't know about. What we do know is that it’s very sophisticated.

In terms of a general critique of AI scoring engines, they're bad at a couple of things. We know that they're not particularly effective when it comes to grammar assessment. Computers are great at certain things. Counting the number of silences between words is a perfect example. So, you have an average of 0.0147 seconds of silence between words. But when it comes to grammatical consistency, especially the kind of grammar produced spontaneously by native speakers, SpeechRater is not super consistent with that type of assessment. I've done a lot of work on this. We're constantly running experiments that deliberately manipulate the output of a speaker. So, creating a 45-second response, for example, without shifting tenses - what does that do to the grammar score?

The other critique I have at the moment concerns topic development. Currently, though I’m sure it’ll change, there's no keyword trapping. There's also no way for the engine to verify whether or not your English is truly spontaneous. So you could read an article out of the New York Times and you would get a score based on that reading.

An average TOEFL speaking question goes something like this. Some people believe that children should be paid to perform household chores, while others think it's a bad idea. What do you believe and why? A standard TOEFL speaking spontaneous response would be a candidate’s opinion on that. Something like: “I think it's a great idea to pay kids to help out around the house for a couple of reasons.” The response could begin in that fashion.

However, you could read an article about the World Cup into the engine. It would give you back a valid score based on the content that you read in. Nevertheless, it wouldn't be able to say you didn't answer the question. This goes back to my point about AI taking over the world and rendering teachers obsolete. I didn't say that. However, you do need that human element. Thankfully, ETS has that human element ever present in their assessment scheme. The flow is that you're in a test centre taking TOEFL speaking. Then, you record your responses and SpeechRater assesses them. The scoring engine then quantifies the responses in the ways that we've touched on. Finally, everything goes to a human rater. And the human rater says, is this person really answering the question?

So, SpeechRater comes back and says “grammar's bad”. And the human rater says, you know what, “grammar's good”. But I can see why SpeechRater made that judgement because of the complexity of the grammar, for instance. So how do you engineer a perfectly valid and reliable scoring engine that doesn’t require any type of human intervention to validate, confirm or correct this AI analysis? Well, you'd need much greater advances in the areas of topic development and grammatical assessment.”

7. In your TOEFL Speaking e-Book*, you wrote that: “Fluency is the feature most likely to determine a successful TOEFL Speaking outcome”. You also mention that the “Quick win” is to boost students’ Speaking Rate to ±170wpm. What kinds of activities should teachers be doing with students to achieve such a feat?

“That's a great question. 170 might be a bit of a reach for some people. The first thing that I believe in is encouraging noticing. This has been at the heart of my methodology throughout my teaching career - when I was in the classroom, when I went online and even today. So I have to say that speaking rate, as a proxy for fluency or speaking speed, if I could put it that way, is a great thing that you can help students to notice really quickly. That's why I wrote in my book that it's a “quick win” because you can clock it. On My Speaking Score, we can measure a candidate’s speed. It's a super easy calculation to make. You can even do it manually.

So, then you can tell a student: “here is your speaking speed”. SpeechRater rewards certain features of your speaking that are typically not rewarded, or even frowned upon, in daily life. Like, if somebody just spoke continuously, as I'm doing right now, your eyes would start to glaze over. There's no pausing, no turn taking, right?

So, you get your student to notice where they’re at regarding speaking rate. Here's your current speed, here's where we want to go. It's 150, or it's 170, it's something like that. But the student’s at 112. So what are the things that we can do to bridge that gap? How can we get that student to speak faster in order to get a high TOEFL speaking score?

Well, the first thing we probably want to do is work on that student’s disfluencies. We want to start eliminating some of the “ums” and “ahs” if that's a particular problem. I'm actually learning Italian right now. I find that, when I'm with my teacher, Federico, I'm always searching for something. What's probably happening cognitively is I'm translating in my head. I know what I want to say in English. Indeed, I can express myself very easily in English. However, I'm always trying to bend English-type grammatical patterns to see if they work in Italian. Or, I could just be searching for the right word. Anyway, all kinds of things are interfering with my speed. So, the teacher and student should take stock of the situation. The student should take a deep breath and simplify what they’re trying to say. Once it becomes clear which areas are interfering with the student’s ability to produce coherent, clear, uninterrupted speech, then we're off to the races.

The thing we can do next is to get the student to speak faster without compromising their pronunciation. But there's possibly a trade off there. Imagine the scene. I can get you up to 150 words a minute, but now I can't understand you. So, there could be all kinds of opportunities to work with the student in that way. Still, the 1, 2, 3 of it is to notice it, start fixing the low-hanging fruit, like trying to remedy or correct disfluencies, and then do some activities that will encourage speed. One of the things that works for me really well in Italian is reading out loud at home. So I'll read to my daughter or I'll read to myself and try to speed up. And there are all kinds of activities that any well trained teacher will know. Think of an approach like the backward build up technique.

So, I'm glad you mentioned speaking rate and I'm flattered that you picked that out of my book. One of the things that you want to do with a student very quickly is give them the last slide first. By that, I mean you don't start with all of the theory. In fact, right from the get-go, in lesson one, you say to the student - we're going to get you speaking more quickly. Tell the student - you're going to see within the next 20 minutes your overall TOEFL speaking score. We’re going to go from here to here, and all we're going to do is speed you up 10 or 15 words per minute. It's so encouraging, right? And when you see that line move, that's something that a teacher can't do, right?

Occasionally, a teacher might drip out a compliment at the end of a class. My Federico often tells me “oh, you spoke really well today”. And I'll be thinking, that's so great. But, for a test taker, especially a self-guided test taker who doesn't have access to a Federico, you want to be able to say, wow - look what I just did. The test taker notices a problem area, makes a change, then re-records and, finally, notices the improvement. That's the type of quick win that will really keep a student coming back, and that motivation to improve will reinforce itself.”
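
The manual speaking-rate calculation John mentions can be sketched in a few lines of Python. This is only an illustration under simple assumptions: words are counted by splitting a transcript on whitespace, and the response length is known in seconds. The function names are hypothetical, and My Speaking Score may measure speed differently.

```python
def words_per_minute(transcript: str, duration_seconds: float) -> float:
    """Return the speaking rate, in words per minute, for one recorded response."""
    if duration_seconds <= 0:
        raise ValueError("duration_seconds must be positive")
    word_count = len(transcript.split())  # naive count: whitespace-separated tokens
    return word_count * 60.0 / duration_seconds


def gap_to_target(current_wpm: float, target_wpm: float) -> float:
    """Return how many words per minute the speaker still needs to gain."""
    return max(0.0, target_wpm - current_wpm)


# Hypothetical example: a student says 84 words in a 45-second response.
sample_transcript = " ".join(["word"] * 84)
rate = words_per_minute(sample_transcript, 45.0)

print(rate)                        # 84 * 60 / 45 = 112.0
print(gap_to_target(rate, 150.0))  # distance to a 150 wpm goal
```

In this example the student lands at 112 words per minute, the same starting point John uses, so the gap to a 150 wpm goal is 38 words per minute. Re-recording and recalculating after each attempt gives the student the "line moving" effect he describes.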

Steve:

Yes. There’s a lot to think about in terms of the conversation-type classes I have with my students where we also focus on learning vocabulary and collocations. I insist on getting students to create true sentences about themselves and their lives which contain all this lexis. Frankly, I haven’t got my students to ‘notice’, nor really worked on the various types of disfluencies you’ve mentioned. For my students preparing for their C1 and C2 Cambridge exams, perhaps I need to implement a more data-driven approach when it comes to their fluency levels, rather than organising the usual communicative approach-type activities with them.

John:

“One of the things that AI does for good or ill is it quantifies a lot of previously qualitative stuff. And, as a teaching aid, as you're saying, you share a screen with your student and you say, look, here's the line, here's where you are right now in whatever dimension that is. It could be pronunciation, vocabulary depth, speaking rate, pause frequency, or whatever we're calculating on the back end. And we say, our objective today is just to move that line together and here's how we're going to do it. We're going to move your repetitions line from the 54th percentile to the 80th percentile. And we're going to do it in the following way. 1, 2, 3, let's go - record, notice, correction, intervention, intervention. Try again - line moves a little bit. I think that that's a very effective way in certain contexts. It doesn't work for everybody, but in some contexts and for some types of learners - it is the way to go.”

8. I must quote you from your Perfect TOEFL Speaking e-book once again:

“TOEFL Speaking loves structure. Structure is relatively easy to teach. Start with structure.”

How can teachers go about teaching logical discourse coherence to students?

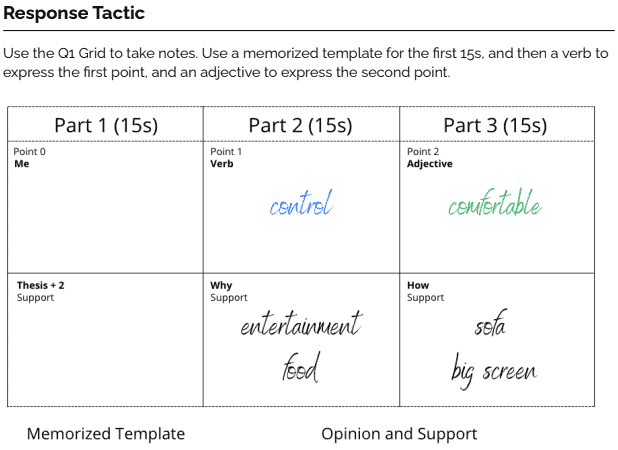

“In order to accomplish most of the objectives that SpeechRater measures, namely those aspects of fluency I talked about earlier, such as pause frequency and speaking rate, it's much more effective to have a framework to follow. The reason is that you don't know what the questions are going to be on the test. So, if you have a response framework, a general approach that's guiding your preparation activities before students answer the question, the desired outcome is a general response flow that we can then use in any context so that we can just take the inputs from the task and then we can kind of plug them in.

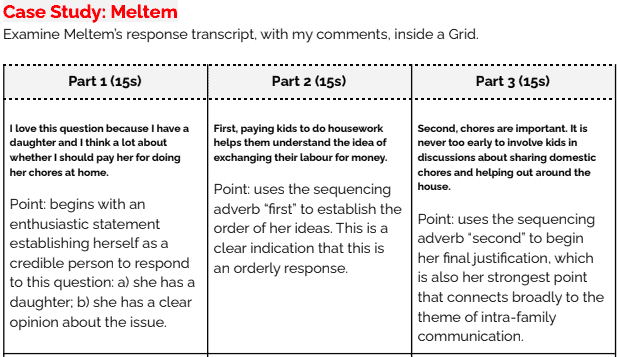

In the book, and, in my days where I was doing a lot of coaching, I used to teach a system called the grid*. The grid is a very simple way to visualise how your response is going to be structured. It also helps with note taking. So in TOEFL speaking, as you may know, candidates get some time to prepare their responses prior to speaking. In question one, candidates get a prompt, 15 seconds’ preparation time, and then 45 seconds to respond. In question two, they have a reading, and then they listen to a conversation about that reading. Next, they have some time to prepare and then they deliver a response. So, during this preparation time, and while they're consuming the listening inputs and reading the passages, candidates can take down notes.

Therefore, this idea of having some type of framework to help test takers structure a response is really valuable. I find that helping students understand how they manage their time while tackling a task helps them to stay on track. This is because it’s very easy for test anxiety to creep in, especially with all their possible worries about their disfluencies which they know SpeechRater is picking up on.

Small mistakes in even one of the tasks can blow up the whole experience for a student. Therefore, applying scaffolding, and training the test taker to use an approach that guides them during the note taking process of the input stage, is the only way to prop up a nervous test taker on test day. So, when they're confronted with a new set of inputs, they can go back to the training that they've received and deliver high scoring responses like they’ve been doing again and again from the security and privacy of their own home.”

* What is a Grid in a TOEFL Speaking context?

A Grid is a framework that helps students structure notes into a logically connected, high-scoring response. It works well for all four TOEFL Speaking tasks.

You can read more about using a grid in John’s e-Book.

Here are a few visual representations of the strategy:

Source: Healy, J. Perfect TOEFL Speaking (e-Book)


Achieving a high TOEFL speaking score

This interview with John Healy was enlightening, eye-opening and very necessary.

For me, it wasn’t just about how TOEFL test takers can get a high TOEFL speaking score.

No. The interview made me realise the boundless opportunities AI affords in both language testing and language teaching.

I sincerely wish John Healy much success in his endeavours.